The Randomisation Ideal and Its Limits

Randomised controlled trials (RCTs) are often described as the gold standard of impact evaluation. By randomly assigning participants to treatment and control groups, they eliminate selection bias and provide clean estimates of causal effects. Yet in the messy reality of South Asian development, randomisation is frequently impossible, impractical, or unethical.

Consider a state government rolling out a new nutrition programme across all districts simultaneously — there is no control group. Or an NGO working with trafficking survivors — randomly denying services to half the participants raises profound ethical concerns. Or a community-driven development programme where villages self-select into participation — the very act of volunteering signals motivation that confounds any comparison. In these situations, quasi-experimental designs offer rigorous alternatives that can still generate credible evidence of programme impact.

These methods do not eliminate all threats to internal validity, but when applied carefully with transparent assumptions, they provide far stronger evidence than simple before-after comparisons or cross-sectional analyses. For evaluation practitioners in South Asia, mastering these tools is not optional — it is essential for building an evidence base that policymakers can trust.

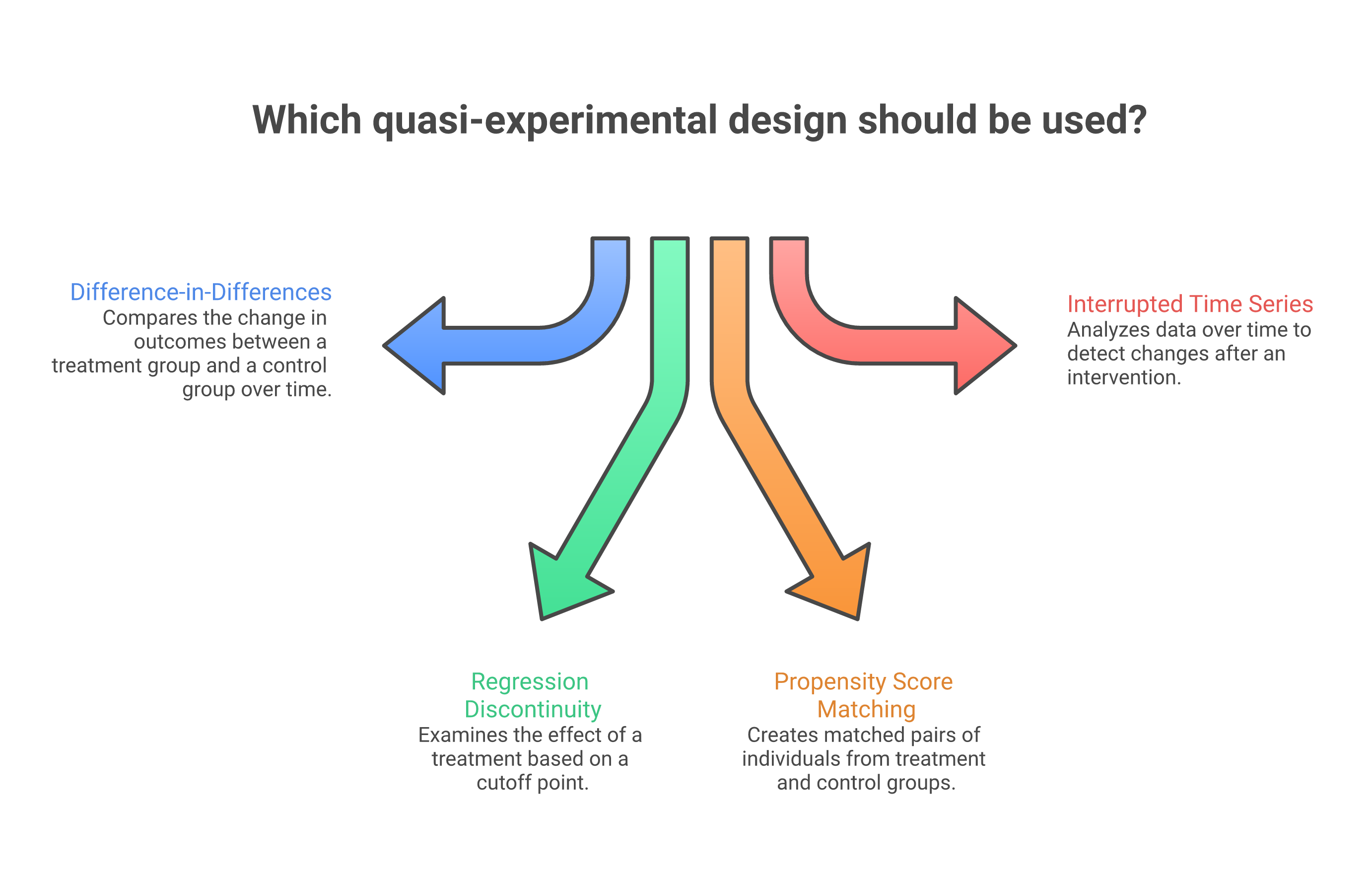

Difference-in-Differences (DiD)

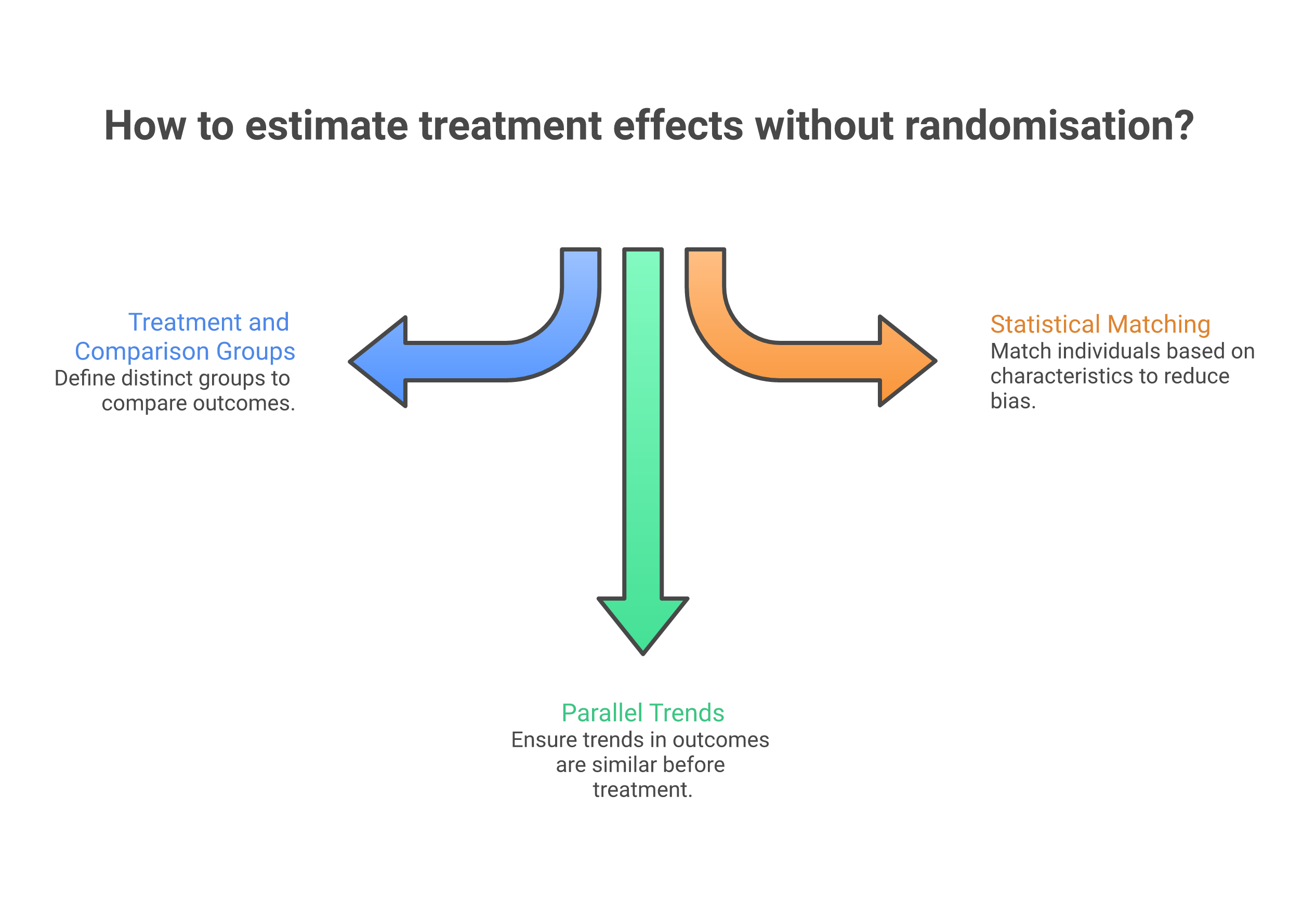

Difference-in-differences is perhaps the most widely used quasi-experimental method in development evaluation. The intuition is elegant: compare the change over time in the treatment group with the change over time in a comparison group. The "double difference" removes both time-invariant differences between groups and common temporal trends.

The critical assumption is the parallel trends assumption — that in the absence of the intervention, both groups would have followed the same trajectory. This assumption cannot be directly tested for the post-treatment period, but evaluators can examine whether pre-treatment trends were parallel. If treatment and comparison districts showed similar trends in child malnutrition rates for five years before the nutrition programme launched, it is more credible to attribute post-programme divergence to the intervention.

In India, DiD has been used extensively to evaluate policy reforms. A notable example is the evaluation of the Mahatma Gandhi National Rural Employment Guarantee Act (MGNREGA), where researchers compared districts that received the programme in Phase 1 (2006) with districts that received it later, using the staggered rollout as a natural experiment. The analysis found significant effects on agricultural wages and consumption expenditure in early-implementation districts.

Regression Discontinuity Design (RDD)

Regression discontinuity exploits situations where programme eligibility is determined by a threshold on a continuous variable. Households below a poverty score receive benefits; those above do not. Students scoring above a cutoff receive scholarships; those below miss out. The key insight is that individuals just above and just below the threshold are essentially similar — any discontinuity in outcomes at the cutoff — measured through carefully chosen indicators — can be attributed to the programme.

RDD has been applied powerfully in the Indian context. The evaluation of the Rashtriya Madhyamik Shiksha Abhiyan (RMSA) secondary education programme used district-level eligibility criteria based on gross enrolment ratios. Districts just below the eligibility threshold received programme resources while otherwise similar districts just above the threshold did not. This enabled credible estimation of programme effects on school infrastructure and enrolment.

"Quasi-experimental methods do not require us to abandon the quest for causal inference — they require us to be more creative, more transparent, and more honest about our assumptions." — Martin Ravallion

Propensity Score Matching (PSM)

When neither a natural experiment nor a threshold exists, propensity score matching offers another path to credible comparison. PSM constructs a statistical comparison group by matching programme participants with non-participants who have similar observable characteristics — age, income, education, location, household size, and so on. The "propensity score" is the predicted probability of participation based on these characteristics, and matching is done on this single score rather than on each variable individually.

The critical limitation is that PSM can only account for observable differences. If unobserved factors — motivation, social networks, political connections — drive both participation and outcomes, PSM estimates will be biased. This is known as "selection on unobservables" and is the Achilles heel of matching methods. In the South Asian context, where social capital and caste networks often determine programme access, this limitation must be taken seriously.

Despite this caveat, PSM has proven valuable in evaluating programmes like microfinance interventions in Bangladesh and self-help group programmes in Andhra Pradesh, where rich baseline surveys provided extensive covariates for matching. Understanding why sample size matters and getting the sample size right is critical for the power of these analyses. The key is to combine PSM with sensitivity analyses that assess how large unobserved biases would need to be to overturn the findings.

Interrupted Time Series (ITS)

Interrupted time series analysis examines whether a policy change or programme launch altered the level or trend of an outcome measured repeatedly over time. Unlike DiD, ITS does not require a comparison group — it uses the pre-intervention trend as the counterfactual. This makes it particularly useful for evaluating national policies that affect everyone simultaneously.

In South Asia, ITS has been applied to evaluate the impact of India's Swachh Bharat Mission on sanitation outcomes, using district-level monthly data on toilet construction and open defecation rates. The analysis examined whether the campaign's launch in 2014 produced a discontinuous jump in sanitation indicators beyond the pre-existing trend. Similar approaches have evaluated Bangladesh's tobacco control legislation and Sri Lanka's post-tsunami reconstruction policies.

The main threat to ITS validity is co-occurring events. If another programme launched at the same time, or if an economic shock coincided with the intervention, attributing the change solely to the programme becomes problematic. Adding a comparison series — a similar outcome that should not have been affected by the intervention — strengthens the design considerably.

Strengthening Quasi-Experimental Evidence

No single quasi-experimental method provides the same internal validity as a well-implemented RCT. However, several strategies can strengthen the credibility of quasi-experimental findings. Triangulation across methods — applying DiD and PSM to the same programme and checking whether results converge — builds confidence, echoing the broader case for mixed methods in evaluation. Placebo tests — checking whether the intervention appears to affect outcomes it should not influence — can rule out spurious findings. Sensitivity analyses — examining how robust results are to different specifications, matching algorithms, or bandwidth choices — demonstrate transparency.

For the South Asian evaluator, the practical message is clear: quasi-experimental methods are not second-best alternatives to RCTs. They are appropriate tools for the vast majority of real-world evaluation situations. The key is to choose the right design for your context, be transparent about assumptions, and invest in the data quality that these methods demand. A well-executed DiD or RDD study with transparent assumptions will always be more useful than a poorly implemented RCT with high attrition and contamination.