The acronym MEAL—Monitoring, Evaluation, Accountability, and Learning—is so commonplace in the development sector today that it is easy to forget how recently it emerged. The journey from simple project monitoring to the integrated framework we use now reflects decades of hard-won lessons about what it takes to create lasting change.

The Early Days: Monitoring as Bookkeeping (1960s–1970s)

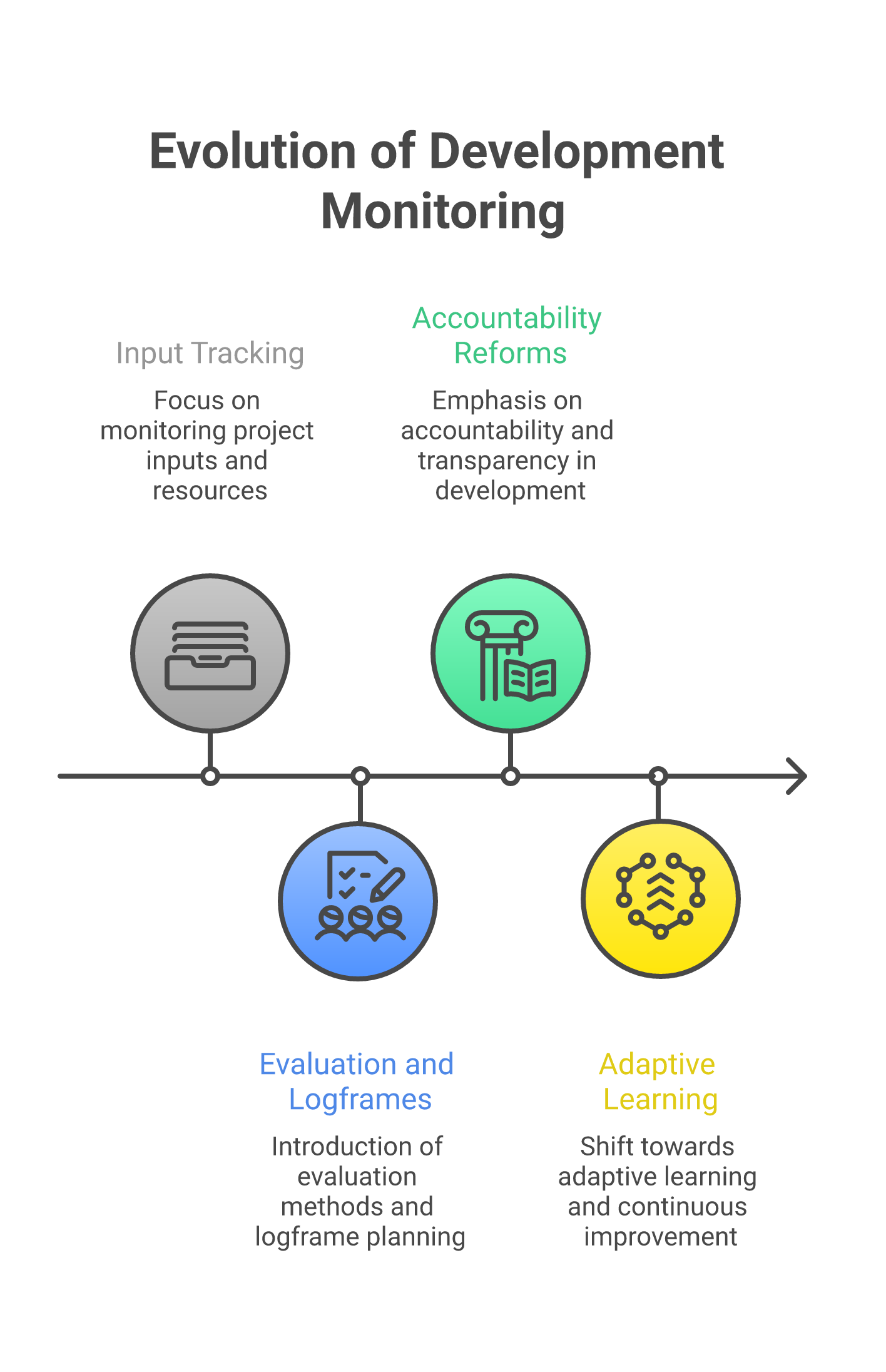

In the early decades of international development, monitoring meant tracking money. Did the funds arrive? Were they spent as planned? Colonial-era and post-independence development programmes focused on counting what was delivered: how many wells built, how many schools constructed, how many tonnes of grain distributed.

This approach treated development as an engineering problem: design the intervention, allocate resources, deliver outputs. The assumption was that if inputs were correct, outcomes would follow automatically. There was little systematic effort to check whether this assumption held.

The Rise of Evaluation (1980s–1990s)

The 1980s brought a fundamental shift. Donors and governments began asking not just "what did we do?" but "did it work?" A key tool of this period predated it: the logical framework (logframe), which grew out of systems analysis and planning approaches in the U.S. defense sector, had been formalised for development use by USAID through a contract with Practical Concepts Inc. in the late 1960s. By the 1980s it had been widely adopted as a planning and monitoring tool.

This era saw the rise of impact evaluation as a discipline. Randomised controlled trials (RCTs), long established in medical research, began appearing in development contexts. The World Bank and bilateral donors invested heavily in evaluation departments. In 1991 the OECD Development Assistance Committee (DAC) published its influential evaluation criteria (relevance, effectiveness, efficiency, impact, and sustainability), which, with coherence added in the 2019 revision, remain the benchmark today.

"The logframe gave us structure. But structure without learning is just bureaucracy with better formatting."

Yet evaluation remained largely an external exercise. Consultants arrived at programme end, produced reports that few read, and departed. The gap between evaluation findings and programme improvement was enormous.

The Accountability Turn (2000s)

The early 2000s brought accountability into sharp focus. The Sphere Standards, first published in 2000, codified minimum standards for humanitarian response, and the Humanitarian Accountability Partnership (HAP) was established in 2003. The response to the 2004 Indian Ocean tsunami then revealed systematic failures in how aid organisations answered to affected communities, reinforcing the case for both.

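The Paris Declaration on Aid Effectiveness (2005) and the Accra Agenda for Action (2008) widened accountability beyond the relationship between aid agencies and communities to the relationships among development partners themselves. Mutual accountability, under which donors and partner countries are jointly accountable for development results, was one of the Paris Declaration's core principles, and the term entered the sector's vocabulary.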

This period expanded M&E into MEA: Monitoring, Evaluation, and Accountability. Organisations began establishing complaint mechanisms, feedback loops, and transparency standards. For the first time, the acronym grew beyond M&E.

The Learning Revolution (2010s)

The final piece, Learning, emerged from frustration. Despite decades of monitoring and evaluation, the development sector kept repeating the same mistakes. Evaluation reports gathered dust. Monitoring data sat in spreadsheets that nobody analysed. The problem was not a deficit of information but a deficit of learning.

The adaptive management movement, championed by DFID (now FCDO), by USAID through its Collaborating, Learning, and Adapting (CLA) framework, and by various think tanks, argued that programmes needed to be designed for learning, not just measurement.

Key Milestones in MEAL Evolution

- 1969: USAID formalises the Logical Framework (rooted in defense sector systems analysis)

- 1991: OECD DAC publishes evaluation criteria

- 2000: Sphere Standards first edition

- 2003: HAP established for humanitarian accountability

- 2005: Paris Declaration on Aid Effectiveness

- 2010s: Adaptive management and USAID's CLA framework

- 2019: OECD DAC criteria updated (adds coherence)

MEAL in South Asia Today

In South Asia, the MEAL journey has its own character. India's National Rural Livelihoods Mission (NRLM) runs one of the largest community-driven M&E systems in the world, with self-help groups conducting their own monitoring. Bangladesh's development sector, shaped by organisations like BRAC, pioneered approaches that blur the line between programme management and evaluation.

The region faces distinct challenges: the sheer scale of programmes, linguistic diversity that complicates data quality in the field, and the tension between donor-driven accountability frameworks and locally meaningful learning processes.

What the History Teaches Us

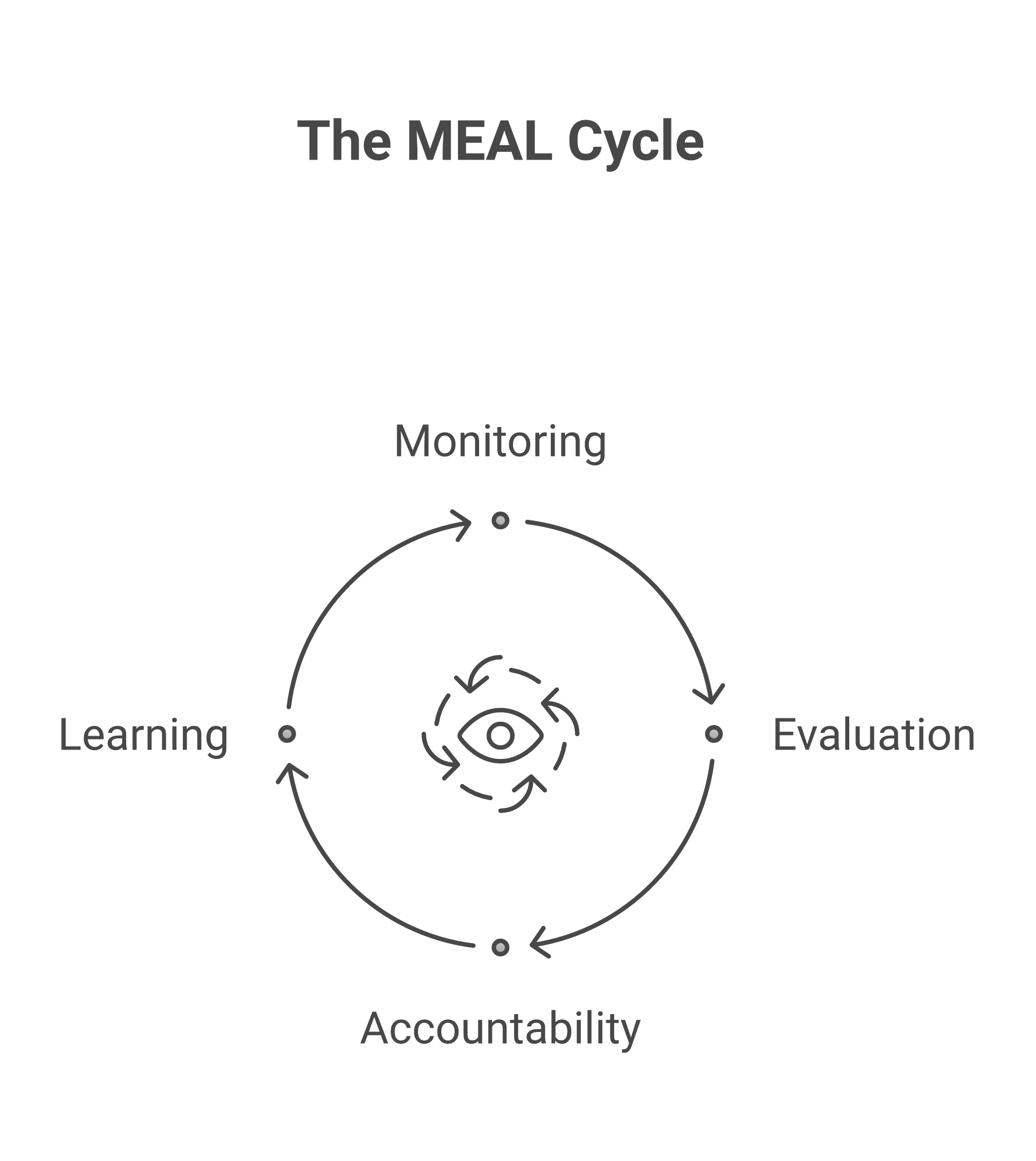

Each expansion of the acronym, from M to M&E to MEA to MEAL, reflects a genuine lesson about what was missing. Monitoring without evaluation is activity without assessment. Evaluation without accountability is assessment without answerability. And all of it without learning is measurement without meaning. Getting the fundamentals right, starting with designing indicators that actually matter, is where good practice begins.

The challenge for today's practitioners is not to treat MEAL as four separate boxes to tick, but as an integrated system where each component strengthens the others. That is the vision ImpactMojo's courses and labs are designed to build.